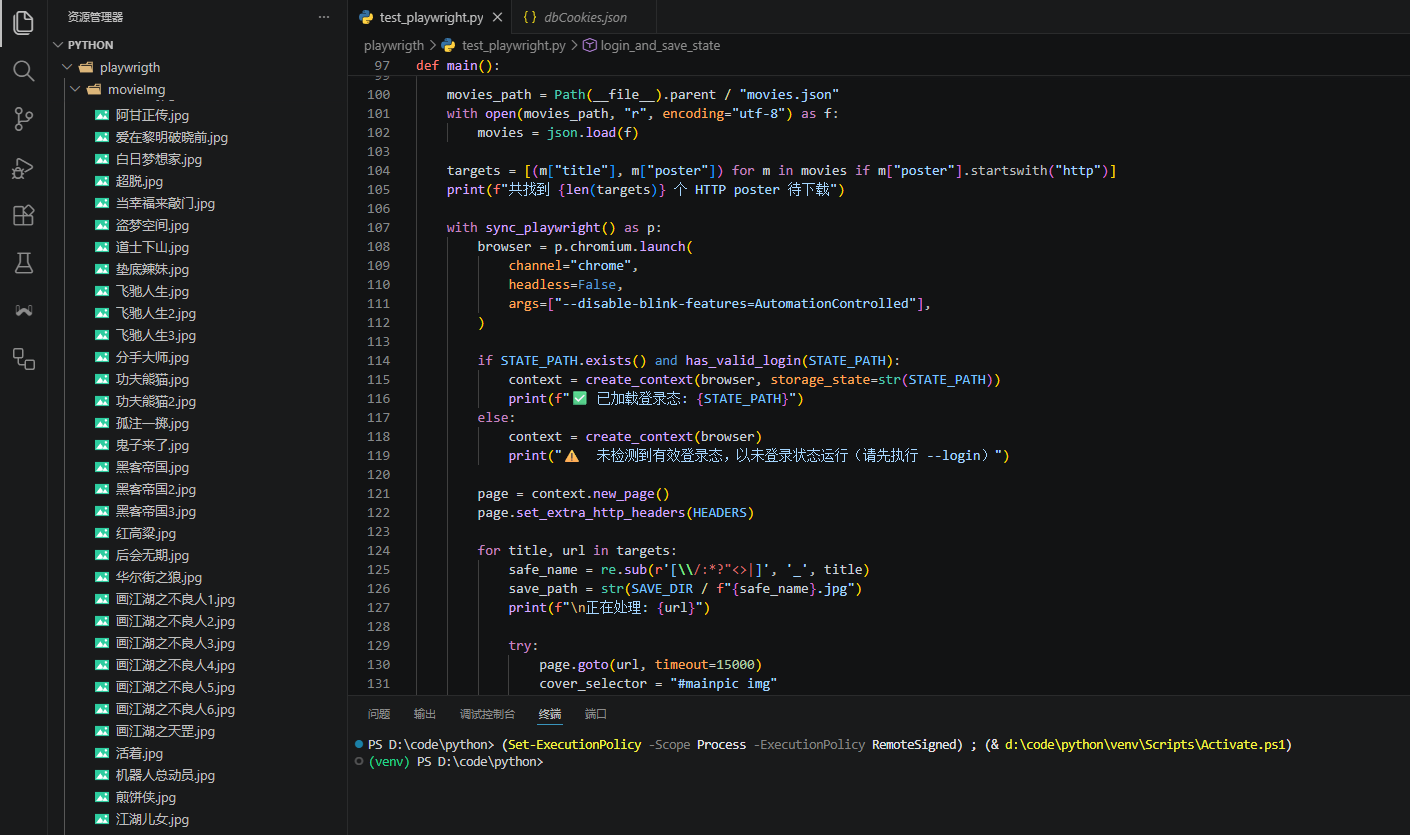

import json
import random
import re
import sys
import time
from pathlib import Path

from playwright.sync_api import sync_playwright

SAVE_DIR = Path(__file__).parent / "movieImg"
STATE_PATH = Path(__file__).parent / "dbCookies.json"

HEADERS = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/147.0.0.0 Safari/537.36",
    "Accept-Language": "zh-CN,zh;q=0.9,en;q=0.8",
    "Referer": "https://movie.douban.com/",
}

STEALTH_SCRIPT = """
// Hide the webdriver flag
Object.defineProperty(navigator, 'webdriver', { get: () => undefined });

// Fill in the plugins array
Object.defineProperty(navigator, 'plugins', {
    get: () => [
        { name: 'Chrome PDF Plugin', filename: 'internal-pdf-viewer' },
        { name: 'Chrome PDF Viewer', filename: 'mhjfbmdgcfjbbpaeojofohoefgiehjai' },
        { name: 'Native Client', filename: 'internal-nacl-plugin' },
    ],
});

// Fill in languages
Object.defineProperty(navigator, 'languages', { get: () => ['zh-CN', 'zh'] });

// Fake the chrome object
window.chrome = window.chrome || {};
window.chrome.runtime = {};

// Override permissions.query; bind the original so it keeps its `this`
// (calling the unbound method throws "Illegal invocation")
const originalQuery = window.navigator.permissions.query.bind(window.navigator.permissions);
window.navigator.permissions.query = (params) =>
    params.name === 'notifications'
        ? Promise.resolve({ state: 'denied' })
        : originalQuery(params);
"""

def create_context(browser, storage_state=None):
    """Create a browser context with anti-detection measures applied."""
    context = browser.new_context(
        viewport={"width": 1920, "height": 1080},
        storage_state=storage_state,
    )
    # Run the stealth script in every page before any site script executes
    context.add_init_script(STEALTH_SCRIPT)
    return context

def has_valid_login(path):
    """Check whether the storageState file contains a valid Douban login."""
    try:
        with open(path, "r", encoding="utf-8") as f:
            data = json.load(f)
    except (json.JSONDecodeError, FileNotFoundError):
        return False

    # The dbcl2 cookie is treated as the marker of a logged-in session
    for c in data.get("cookies", []):
        if "douban.com" in c.get("domain", "") and c.get("name") == "dbcl2":
            return True
    return False

def login_and_save_state():
    """Open the Douban login page, wait for a manual login, then save the storageState."""
    print("Please log in to Douban manually in the browser window that opens...")
    with sync_playwright() as p:
        browser = p.chromium.launch(
            channel="chrome",
            headless=False,
            args=["--disable-blink-features=AutomationControlled"],
        )
        context = create_context(browser)
        page = context.new_page()

        try:
            page.goto("https://accounts.douban.com/passport/login", timeout=30000)
            page.wait_for_load_state("networkidle")
            print("After logging in, press Enter to continue...")
            input()
            # Persist cookies and local storage for later runs
            context.storage_state(path=str(STATE_PATH))
            print(f"✅ storageState saved to: {STATE_PATH}")
        except Exception as e:
            print(f"❌ Login flow failed: {e}")
        finally:
            browser.close()

def main():
    SAVE_DIR.mkdir(parents=True, exist_ok=True)

    movies_path = Path(__file__).parent / "movies.json"
    with open(movies_path, "r", encoding="utf-8") as f:
        movies = json.load(f)

    # Only entries whose poster field is an http(s) URL need downloading
    targets = [(m["title"], m["poster"]) for m in movies if m["poster"].startswith("http")]
    print(f"Found {len(targets)} HTTP posters to download")

    with sync_playwright() as p:
        browser = p.chromium.launch(
            channel="chrome",
            headless=False,
            args=["--disable-blink-features=AutomationControlled"],
        )

        if STATE_PATH.exists() and has_valid_login(STATE_PATH):
            context = create_context(browser, storage_state=str(STATE_PATH))
            print(f"✅ Loaded login state: {STATE_PATH}")
        else:
            context = create_context(browser)
            print("⚠️ No valid login state found; running logged out (run with --login first)")

        page = context.new_page()
        page.set_extra_http_headers(HEADERS)

        for title, url in targets:
            # Replace characters that are not allowed in file names
            safe_name = re.sub(r'[\\/:*?"<>|]', '_', title)
            save_path = str(SAVE_DIR / f"{safe_name}.jpg")
            print(f"\nProcessing: {url}")

            try:
                page.goto(url, timeout=15000)
                cover_selector = "#mainpic img"
                page.wait_for_selector(cover_selector, timeout=10000)
                cover_img = page.query_selector(cover_selector)
                img_src = cover_img.get_attribute("src")
                if not img_src:
                    raise ValueError("cover image has no src attribute")
                print(f"Found image URL: {img_src}")

                # Fetch through the context so the request carries its cookies
                response = context.request.get(img_src, headers={"Referer": "https://movie.douban.com/"})
                if not response.ok:
                    raise RuntimeError(f"HTTP {response.status} for {img_src}")
                with open(save_path, "wb") as f:
                    f.write(response.body())
                print(f"Cover saved to: {save_path}")
            except Exception as e:
                print(f"Download failed [{url}]: {e}")

            # Random pause between pages to avoid hammering the site
            delay = random.uniform(1, 3)
            print(f"Waiting {delay:.1f} seconds...")
            time.sleep(delay)

        browser.close()

if __name__ == "__main__":
    if "--login" in sys.argv:
        login_and_save_state()
    else:
        main()

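The script reads `movies.json`, but the listing does not show that file. As a minimal sketch, assuming each entry is an object with `title` and `poster` keys (the only two fields `main()` reads), the snippet below shows which entries would be selected and what file names they would get. The sample titles and URLs are made up for illustration, and `select_targets` and `safe_filename` are hypothetical helper names that do not appear in the script above.

```python
import json
import re

# Hypothetical sample of movies.json; only "title" and "poster" are read by main().
SAMPLE = """
[
  {"title": "Movie: Part 1", "poster": "https://movie.douban.com/subject/0000001/"},
  {"title": "Local Only", "poster": "movieImg/local.jpg"},
  {"title": "What/If?", "poster": "http://movie.douban.com/subject/0000002/"}
]
"""


def select_targets(movies):
    """Keep (title, poster) pairs whose poster starts with "http", as main() does."""
    return [(m["title"], m["poster"]) for m in movies if m["poster"].startswith("http")]


def safe_filename(title):
    """Replace characters that are invalid in Windows file names with '_'."""
    return re.sub(r'[\\/:*?"<>|]', "_", title) + ".jpg"


targets = select_targets(json.loads(SAMPLE))
names = [safe_filename(title) for title, _ in targets]
print(names)  # ['Movie_ Part 1.jpg', 'What_If_.jpg']
```

Entries whose `poster` is already a local path are skipped, so re-running the script after updating `movies.json` should only visit the pages that still point at a remote URL.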